Microsoft Fabric: The Data Platform You Need Now

Microsoft Fabric unifies data integration, engineering, warehousing, real-time analytics, and BI in one platform — eliminating fragmentation and accelerating insights with AI.

Al Rafay Consulting · Updated April 9, 2026 · ARC Team

Microsoft Fabric is a unified analytics platform that promises a faster path to insights and AI by eliminating fragmented data stacks. Imagine fewer tools to stitch together, no more duplicating data, and consistent governance across your enterprise data — that is the Fabric vision.

In this post, we explore why Fabric delivers end-to-end value for both developers and business users. Along the way, we include practical guidance and interactive elements to help you determine whether Fabric is the right fit for your organization. Let us dive in!

Why Is Microsoft Fabric the Data Platform You Need Now?

The Cost of Fragmentation

Organizations today often struggle with fragmented data ecosystems — multiple ETL tools, separate warehouses, siloed BI systems — resulting in duplicate data and complex integrations. Data gets copied across systems and teams juggle disparate tools, which slows down insights and drives up costs.

Fabric’s Unified Solution

Microsoft Fabric emerges as a transformative solution to fragmentation, offering one comprehensive platform that unifies data integration, engineering, warehousing, real-time analytics, data science, and business intelligence in a single cloud service. This means all roles work over a shared OneLake foundation without needing to manually stitch together separate tools.

Business & Developer Value

A unified platform like Fabric translates to tangible benefits. Teams see reduced complexity, improved collaboration, and faster time to insight when they adopt Fabric’s unified analytics model. You no longer need to manage separate licenses or security models across assorted products — one platform handles it all.

OneLake: Your Unified Data Foundation (“OneDrive for Data”)

Every great structure needs a solid foundation — for Fabric, that foundation is Microsoft OneLake. Think of OneLake as “OneDrive for data”: a single, unified data lake that is automatically available in every Fabric tenant. It provides a central data repository for your entire organization, simplifying data management by eliminating the sprawl of multiple data lakes.

One Lake for All

Before OneLake, companies often created separate data lakes for each department or project, which made governance and collaboration difficult. Now, every Fabric tenant automatically gets one OneLake, and you cannot accidentally create another. Because of this enforced singularity, all teams put their data in the same logical lake (with workspaces and folders for organization), which fosters collaboration on a single source of truth.

Single Copy of Data — Zero Copy Architecture

OneLake is designed so that you do not need multiple copies of the same data for different analytics engines. In Fabric, a table you land in OneLake as Delta Parquet can be read by a Spark notebook, a SQL data warehouse, and a Power BI report without duplicating it for each tool: all engines operate on the common data in place. This zero-copy approach is possible because OneLake stores tabular data in an open format (Delta Parquet) that the various engines can read uniformly.

Built-in Governance and Security

Having one central data lake also means Fabric can govern by default. Any data placed in OneLake automatically inherits tenant-level security, compliance, and data management policies (thanks to integration with Microsoft Purview). OneLake’s architecture also supports workspace-level segmentation for distributed ownership: each Fabric workspace is like a folder in OneLake, with its own RBAC, so business domains can manage their data independently while still residing in the single lake.

Open Standard Support and Shortcuts

OneLake is built on Azure Data Lake Storage Gen2, so it speaks the same APIs and supports the same files and directories you are used to. It stores tabular data in an open format, so you are never locked in — you can even attach external storage via shortcuts (pointers to data in ADLS, AWS S3, etc.) to virtually bring outside data into OneLake without copying it. Shortcuts enable a “virtual data mesh” — different departments can keep data in separate accounts if needed, but OneLake will present it in one unified namespace for analytics.
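Because OneLake exposes ADLS Gen2-compatible APIs, existing tools can usually address its contents with a familiar `abfss://` URI. The following is a minimal sketch of how such a path is put together, assuming the commonly documented `onelake.dfs.fabric.microsoft.com` endpoint. The workspace, lakehouse, and file names are hypothetical placeholders, and `onelake_uri` is an illustrative helper, not part of any Fabric SDK.

```python
# Assumption: the global OneLake endpoint for the ADLS Gen2-compatible API.
# Verify the endpoint for your own tenant before relying on it.
ONELAKE_HOST = "onelake.dfs.fabric.microsoft.com"


def onelake_uri(workspace: str, item: str, item_type: str, relative_path: str) -> str:
    """Build an abfss:// URI for a file or folder inside a Fabric item.

    Illustrative helper only; all names passed in are hypothetical.
    """
    path = relative_path.strip("/")
    return f"abfss://{workspace}@{ONELAKE_HOST}/{item}.{item_type}/{path}"


# Hypothetical workspace "SalesWorkspace" containing a lakehouse "RetailLakehouse".
uri = onelake_uri("SalesWorkspace", "RetailLakehouse", "Lakehouse", "Files/raw/orders.csv")
print(uri)
```

The workspace plays the role that a container plays in ADLS Gen2, which is why the same path can be handed to Spark or to an ADLS-aware client without a copy step.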

Pick Your Build Path: Workloads in Fabric

One of the strengths of Microsoft Fabric is that it offers multiple analytics experiences (workloads) under one roof — each tailored to different personas and tasks — yet all connected via OneLake. Whether you are a data engineer or a BI analyst, Fabric lets you “pick your path” while remaining on the unified platform.

| Scenario | Recommended Workload |
|---|---|
| Building ETL pipelines or prepping big data | Data Engineering notebooks and Data Factory pipelines |
| Central analytics database for BI | Data Warehouse for a SQL-centric approach |
| Event streams or live dashboards | Real-Time Intelligence (KQL) |
| Machine learning and model development | Data Science for integrated notebooks and ML ops |
| Dashboards and self-service reporting | Power BI, seamlessly integrated with the data platform |

The beauty of Fabric is that these are not silos — you can mix and match. A project might use a Spark notebook (Data Engineering) to clean data, save it to a lakehouse, then use a SQL Warehouse to create a star schema, and finally have Power BI dashboards on top — all within the same Fabric workspace.

All roads meet in OneLake, so each workload’s output becomes immediately available to the others when needed. All of this happens on one platform without jumping between services. Fabric’s different experiences share the same UI and security model, making it far easier to go end-to-end compared to patching together a dozen services.

From Data to AI Apps: Build an AI-Ready Solution on Fabric

So far, we have discussed individual pieces. Now let us put it all together in an end-to-end scenario. How do you go from raw data to an AI-powered application using Microsoft Fabric?

Scenario: Building an Intelligent FAQ Bot

Consider a retail company that wants to build an intelligent FAQ bot and analytics app for customer support data. They have support tickets and product info in files, and they want to use AI (LLMs) to answer customer questions based on that data. Here is how Fabric enables this through a series of steps:

- Ingest support tickets and product data into a OneLake lakehouse

- Transform raw data using Spark notebooks or Data Factory pipelines

- Chunk the content and generate embeddings for retrieval with an Azure OpenAI model

- Store embeddings in the Fabric SQL database or a vector index

- Query with RAG — the LLM answers questions grounded in company-specific context

- Surface insights through Power BI reports or a Copilot-powered application

Copilot + RAG in Fabric

Microsoft Fabric makes it feasible to implement Retrieval-Augmented Generation (RAG) largely within the platform. An official quickstart shows how you can combine Fabric’s built-in Azure OpenAI capabilities with Azure AI Search to implement RAG: load data into a lakehouse, chunk it, generate embeddings with an OpenAI model, store or index those embeddings, retrieve the chunks most relevant to a question, and then use an LLM to answer with that context.
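To make the chunk, embed, retrieve, and prompt flow concrete, here is a small dependency-free sketch. It is not the quickstart's code: `toy_embed` is a hypothetical bag-of-words stand-in for a real embedding model such as one served by Azure OpenAI, the in-memory list stands in for a vector index, and the sample documents are invented for illustration.

```python
import math
import re
from collections import Counter


def chunk_text(text: str, max_words: int = 8) -> list[str]:
    """Split text into consecutive chunks of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def toy_embed(text: str) -> Counter:
    """Hypothetical stand-in for an embedding model: lowercase term counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, index: list[tuple[str, Counter]], k: int = 1) -> list[str]:
    """Return the k chunks whose vectors are most similar to the question."""
    q = toy_embed(question)
    ranked = sorted(index, key=lambda pair: cosine(q, pair[1]), reverse=True)
    return [chunk for chunk, _ in ranked[:k]]


# Invented sample documents standing in for support content in a lakehouse.
docs = [
    "Refunds are issued within 5 business days after the returned item is received.",
    "Standard shipping takes 3 to 7 days. Express shipping arrives the next day.",
]
index = [(chunk, toy_embed(chunk)) for doc in docs for chunk in chunk_text(doc)]

question = "How long do refunds take?"
context = retrieve(question, index, k=1)
# The grounded prompt is what would be sent to the LLM in a real pipeline.
prompt = f"Answer using only this context:\n{context[0]}\n\nQuestion: {question}"
print(prompt)
```

In a real solution the embedding call, the index, and the final LLM call would each be replaced by managed services; the control flow stays the same.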

Fabric acts as the glue between your data and AI — everything from data prep to AI app deployment can happen within Fabric’s managed environment.

Build vs. Buy in Fabric

- Build from scratch: Use Fabric’s components (notebooks, pipelines, SQL DB, OpenAI) to assemble a custom AI solution. This offers maximum flexibility, control, and learning opportunities.

- Use prebuilt solutions: Microsoft and third-party accelerators built on Fabric provide out-of-the-box AI templates for common use cases — fast to deploy when customization is not required.

Fabric supports both paths and truly shines by enabling custom-built AI solutions without requiring a hodgepodge of different cloud services.

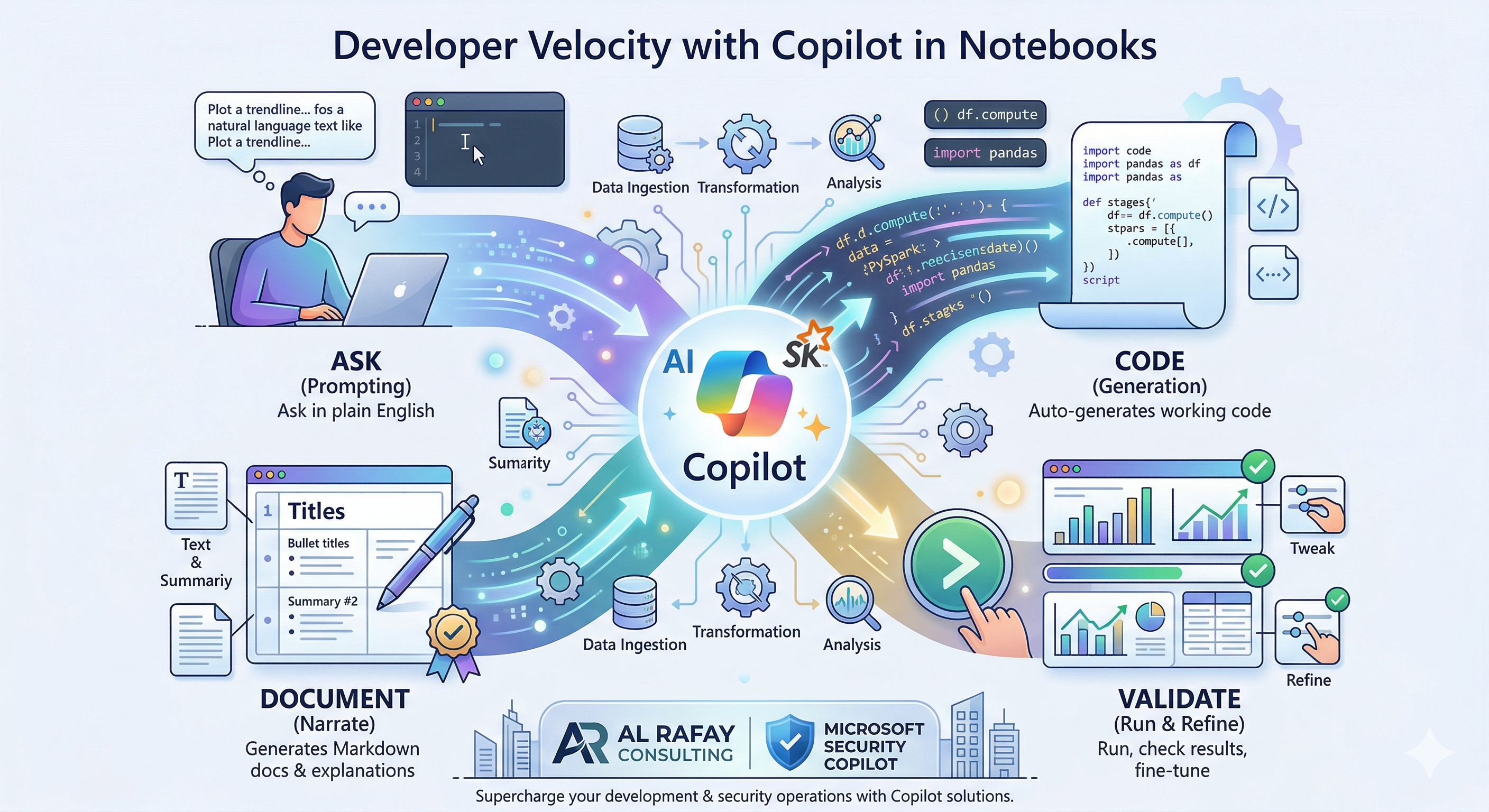

Developer Velocity with Copilot in Notebooks

For developers and data scientists, one of the most exciting Fabric features is Copilot in Fabric Notebooks — an AI assistant that dramatically accelerates your workflow by turning natural language prompts into working code, helping visualize data, and even generating documentation.

Ask → Code → Validate → Document

- Ask: In Fabric notebooks, you can simply ask Copilot to perform a task in plain English — “load this CSV into a DataFrame and show summary statistics” — and it will generate the code for you.

- Code: As you write, Copilot provides code completion and suggestions for Spark, Pandas, T-SQL, and more. Its suggestions often follow widely used idioms for common data tasks, which can also help you learn better practices.

- Validate: After Copilot generates code, run it in the notebook and validate the results. If tweaking is needed, describe the issue to Copilot and it adjusts — no context switching required.

- Document: Once code works, Copilot can generate markdown cells that explain the analysis, helping you produce well-narrated notebooks automatically. This helps you not just solve the problem but also present it.

Copilot accelerates every stage: from ingesting data, to transforming, analyzing, explaining results, fixing code issues, and documenting your process — all within a single notebook session.
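As an illustration of the "Ask" example, the sketch below shows the kind of logic a prompt like "load this CSV and show summary statistics" boils down to. It is not actual Copilot output: in a Fabric notebook the generated code would more likely use pandas or Spark, and the inline sample data and the `summarize` helper are hypothetical, chosen so the example runs with only the standard library.

```python
import csv
import io
import statistics

# Hypothetical sample data standing in for a CSV file stored in a lakehouse.
SAMPLE_CSV = """category,sales
Toys,120.0
Toys,80.0
Books,50.0
Books,70.0
"""


def summarize(csv_text: str, column: str) -> dict[str, float]:
    """Return count, mean, min, and max for one numeric column."""
    rows = csv.DictReader(io.StringIO(csv_text))
    values = [float(row[column]) for row in rows]
    return {
        "count": len(values),
        "mean": statistics.mean(values),
        "min": min(values),
        "max": max(values),
    }


summary = summarize(SAMPLE_CSV, "sales")
print(summary)
```

Checking a summary like this against what you expect from the source data is a quick way to carry out the "Validate" step before building on generated code.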

Quick Quiz: Testing Your Copilot Knowledge

Which Copilot prompt would you use to quickly understand a DataFrame’s contents?

A. “Generate code to drop duplicates” — (This is for transformation, not directly for understanding.)

B. “Show the summary statistics of the DataFrame” — (Yes! This would have Copilot produce a .describe() or similar, helping you understand the data.)

C. “Plot sales by category” — (That is analysis/visualization of one aspect.)

If you chose B, you are correct — asking for summary stats is a great way to have Copilot help you validate and understand data.

Governance That Scales: Purview + Fabric

No enterprise data platform is complete without robust governance, and Microsoft Fabric has been built with governance in mind from day one. Microsoft Purview (the data governance service) is natively integrated into Fabric, which means you get enterprise-grade governance that scales with your data — without the usual friction.

Unified Catalog and Lineage: Fabric provides a OneLake Data Catalog that serves as a central inventory of all data items (lakehouses, tables, pipelines, BI reports, etc.) across the tenant. This provides full visibility into what data exists and where it flows.

Sensitivity Labels and Policies: Through Purview integration, you can apply sensitivity labels (e.g., Confidential, Public, GDPR personal data) to data in Fabric and those labels flow with the data. Downstream consumers inherit the same classification and restrictions.

Access Control and Ownership: Fabric uses the familiar security model of Microsoft Entra ID (formerly Azure AD) and Power BI. Workspaces function as security boundaries; you can assign workspace roles (Admin, Member, Contributor, Viewer) to users or groups.

Key Point: Governance is not an afterthought in Fabric; it is built into the architecture. Microsoft Purview provides unified data governance across Fabric, which helps maintain compliance and data trust even as your analytics usage scales up.

Operating Fabric in Production: Cost, Capacity, and Reliability Patterns

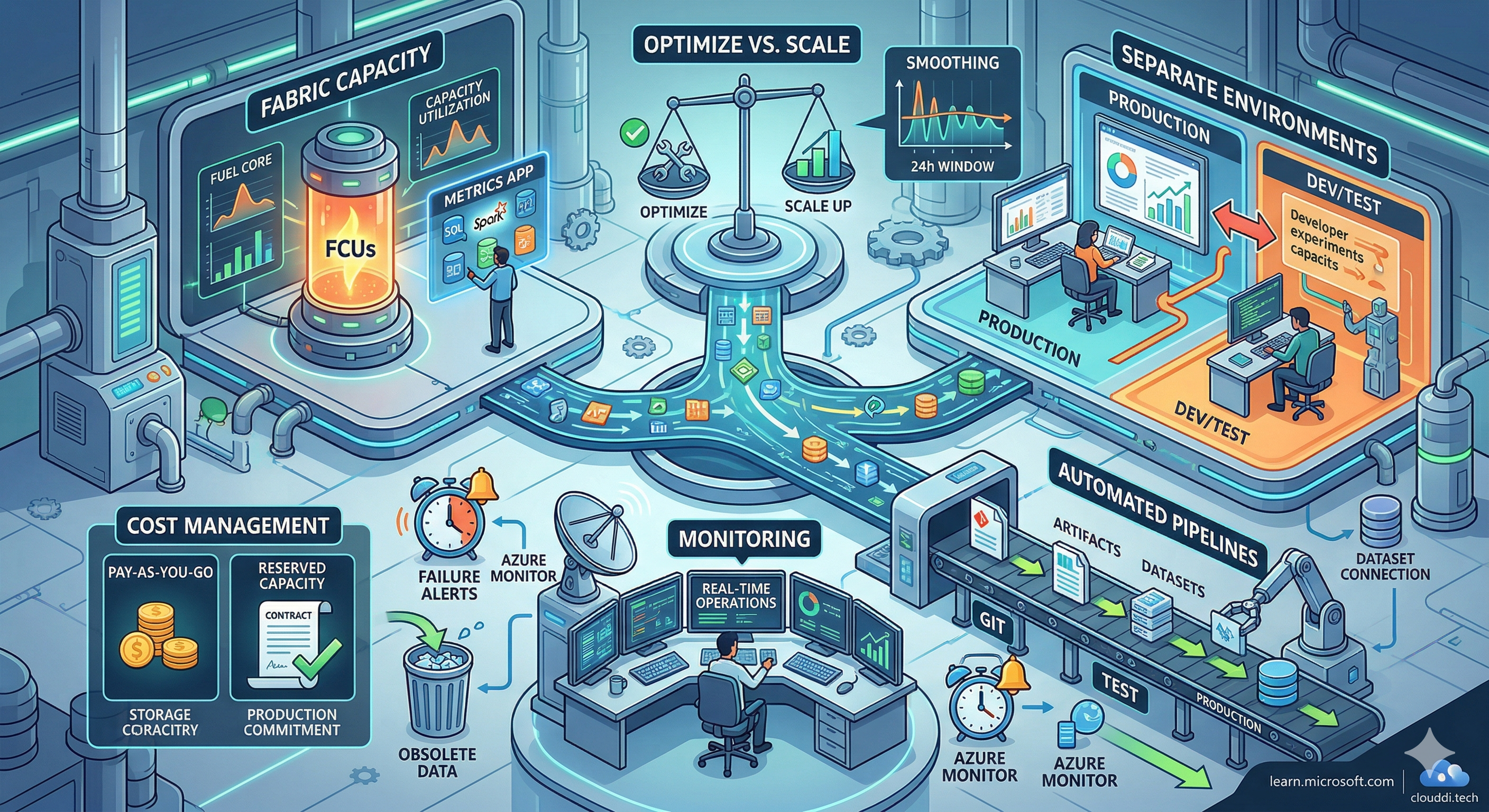

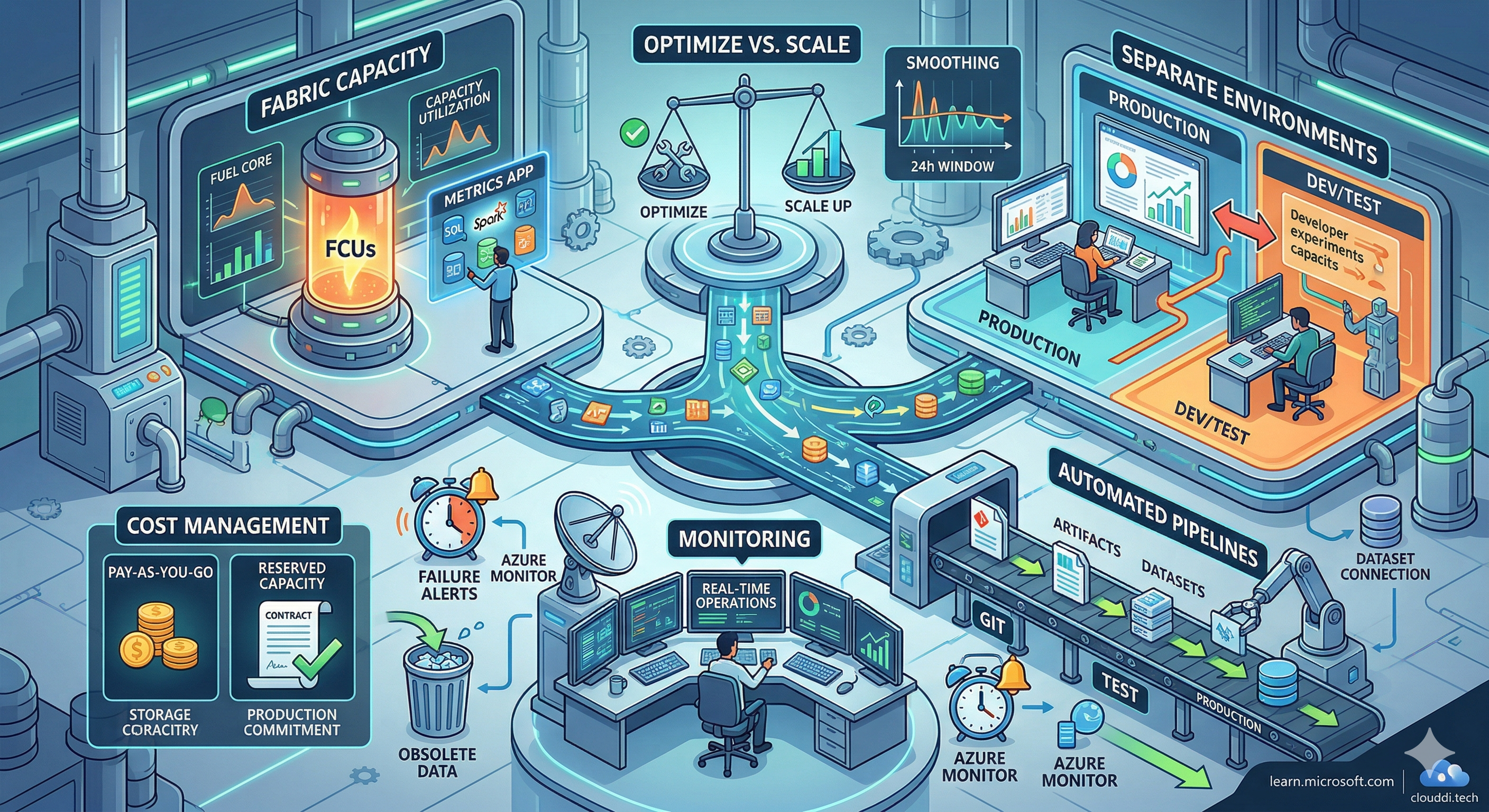

As you move from proof-of-concept into production with Fabric, there are important operational considerations to ensure cost efficiency, performance, and reliability. Fabric uses a capacity-based model (measured in capacity units, or CUs) and brings DevOps principles to data.

Understand and Optimize Fabric Capacity

Fabric runs on a capacity model — you purchase a certain capacity which provides a pool of compute for all your Fabric workloads. It is essential to monitor and optimize capacity utilization. Fabric provides a Capacity Metrics app that breaks down usage by workloads (SQL, Spark, etc.) and items.

When approaching capacity limits, you have choices: optimize your workload or increase capacity. Optimizing might mean tuning queries, caching data, or using Fabric features like smoothing (Fabric automatically spreads heavy background workloads over a 24-hour window to reduce peaks).
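A rough back-of-the-envelope calculation shows why smoothing reduces peaks. The sketch below uses a deliberately simplified model in which a background job's total consumption is spread evenly over 24 hours; Fabric's actual accounting is more granular, and the job size and the 8-CU capacity are hypothetical numbers chosen for illustration.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400 seconds in the smoothing window


def smoothed_cu_rate(total_cu_seconds: float, window_seconds: int = SECONDS_PER_DAY) -> float:
    """Average CU draw when a job's total consumption is spread evenly over the window.

    Simplified model for illustration; not Fabric's exact accounting.
    """
    return total_cu_seconds / window_seconds


# Hypothetical background job: runs for 10 minutes while drawing 64 CUs.
job_cu_seconds = 64 * 10 * 60             # 38,400 CU-seconds in total
rate = smoothed_cu_rate(job_cu_seconds)   # steady draw after smoothing

# Hypothetical capacity of 8 CUs: fraction of it this job occupies once smoothed.
capacity_cus = 8
share = rate / capacity_cus

print(f"Unsmoothed peak: 64 CUs; smoothed draw: {rate:.2f} CUs ({share:.1%} of capacity)")
```

Under these assumptions, a burst that would exceed the capacity eightfold at its peak is accounted for as a small steady load, which is why heavy scheduled jobs do not necessarily force a larger capacity.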

Workload Separation for Reliability

A common pitfall is running experiments in the same capacity that is serving production reports — a heavy dev query slows down a critical business report. The recommendation is dedicated workspaces for Production and for Dev/Test, ideally on separate Fabric capacities.

CI/CD and Deployment Pipelines

Treat Fabric items (reports, semantic models, notebooks) like code. Use Fabric’s Deployment Pipelines for controlled promotion from Dev to Test to Production; deployment rules can handle stage-specific settings such as data source connections. Also consider putting your Fabric code under source control: workspaces can be connected to Git, which gives notebooks version control and auditability.

Monitoring and Cost Management

- Route Fabric logs to Azure Monitor Log Analytics and set alerts on critical pipeline failures (e.g., if nightly ETL fails) so on-call can fix it quickly

- Use the Monitoring Hub in Fabric to see current operations and their status in real-time

- Leverage the Admin monitoring workspace for tenant-level views of activity

- Evaluate reserved capacity (annual commit) for predictable production workloads — more cost-efficient than pay-as-you-go once baseline usage stabilizes

- Manage OneLake storage by retiring obsolete data — no need to keep old data around forever
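The reserved-versus-pay-as-you-go decision in the list above comes down to simple arithmetic. The sketch below assumes that a pay-as-you-go capacity is billed only while it is running and that a reservation is billed for every hour at a discount; the hourly rate, the 25% discount, and the 730-hour month are hypothetical placeholders, not published prices.

```python
HOURS_PER_MONTH = 730  # approximate hours in a month (assumption)


def payg_cost(hourly_rate: float, hours_running: float) -> float:
    """Monthly pay-as-you-go cost when the capacity is paused outside running hours."""
    return hourly_rate * hours_running


def reserved_cost(hourly_rate: float, discount: float, hours: float = HOURS_PER_MONTH) -> float:
    """Monthly reserved cost: discounted rate, billed for every hour of the month."""
    return hourly_rate * (1 - discount) * hours


def break_even_hours(discount: float, hours: float = HOURS_PER_MONTH) -> float:
    """Running hours per month above which the reservation becomes cheaper."""
    return (1 - discount) * hours


# Hypothetical numbers for illustration only.
rate, discount = 10.0, 0.25
threshold = break_even_hours(discount)

print(f"Break-even at {threshold} running hours per month")
print(f"300 h: pay-as-you-go {payg_cost(rate, 300)} vs reserved {reserved_cost(rate, discount)}")
print(f"730 h: pay-as-you-go {payg_cost(rate, 730)} vs reserved {reserved_cost(rate, discount)}")
```

With these placeholder values, a capacity that runs around the clock favors the reservation, while one that is paused most nights and weekends may not; plugging in your own rate and discount gives the threshold for your workload.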

Frequently Asked Questions

What is Microsoft Fabric and how is it different from Power BI or Azure Synapse?

Microsoft Fabric is a unified SaaS analytics platform that brings together capabilities previously spread across Power BI, Azure Synapse Analytics, Azure Data Factory, and Azure Data Explorer into a single, integrated experience. Unlike using these as separate services, Fabric provides one licensing model, one OneLake data foundation, and a unified governance layer — dramatically reducing the operational complexity of running a modern data platform.

What is OneLake in Microsoft Fabric?

OneLake is Microsoft Fabric’s built-in, tenant-wide data lake, conceptually similar to OneDrive but for organizational data. Every Fabric tenant gets exactly one OneLake automatically. Data stored in OneLake in the open Delta Parquet format can be accessed by every Fabric workload (Spark, SQL, Power BI) without copying. External data in Azure, AWS S3, or other storage can be brought in virtually via shortcuts, without duplication.

Does Microsoft Fabric replace Azure Synapse Analytics?

Microsoft Fabric incorporates and evolves the core capabilities of Azure Synapse Analytics (Spark-based data engineering, SQL-based data warehousing, and pipelines) within a unified SaaS platform. While Synapse remains available, Microsoft’s strategic direction is Fabric. Organizations planning new data platform investments are generally advised to build on Fabric, and Microsoft provides migration guidance for existing Synapse users.

How does Copilot in Microsoft Fabric help data engineers and analysts?

Copilot is embedded across multiple Fabric experiences. In Notebooks, it generates Spark and Python code from natural language prompts, completes code intelligently, explains errors, and writes markdown documentation. In Power BI, Copilot generates reports and DAX measures from natural language descriptions. In Data Factory, it assists with pipeline design. The overall effect is higher developer velocity: tasks that previously required hours of manual coding can be drafted in minutes and refined iteratively.

Conclusion

Microsoft Fabric truly aims to be “the data platform you need now” — addressing the pain points of the past (silos, complexity, slow development cycles) with a new unified approach. Its integrated OneLake foundation, multi-workload flexibility, native Copilot acceleration, and built-in Purview governance make it a compelling choice for the modern data-driven enterprise in 2026 and beyond.

Whether you are starting a new data platform or modernizing a fragmented stack of ETL tools and separate warehouses, Fabric provides a path to faster insights, lower operational overhead, and a foundation ready for AI at scale.

Your Next Steps

Start a 2-Week Fabric Proof-of-Concept: Kick the tires on Fabric in your own environment. Identify a small project (e.g., unify one data source and create one insightful report) and deliver it on Fabric. With the right scope, you can demonstrate value in two weeks by replacing a fragmented process with a unified Fabric solution.

Assess Your Fabric Readiness: Ensure your organization is ready to adopt Fabric with proper guidance. This covers governance setup (Purview integration, security roles), capacity planning, and identifying candidate workloads to migrate — so you can plan your Fabric rollout with fewer surprises.

Explore Copilot Hands-On: Get hands-on with Copilot — try practical prompts in Fabric notebooks or Power BI. Learn by doing: ask Copilot to create a visualization or write a query. It is the best way to experience the productivity boost firsthand.

If your organization is exploring Microsoft Fabric adoption, ARC can help with architecture design, workload migration, governance setup, and AI-ready data platform implementation.

Al Rafay Consulting

ARC Team

AI-powered Microsoft Solutions Partner delivering enterprise solutions on Azure, SharePoint, and Microsoft 365.
