Microsoft Foundry Developer Use: Deploy Custom LLMs Fast

Learn how Microsoft Foundry accelerates custom LLM deployment with one-click endpoints, Azure integration, LLMOps practices, and enterprise security controls.

ARC Team · Updated April 6, 2026

Why Microsoft Foundry Matters for LLM Deployment

Many AI initiatives fail at the transition from prototype to production because infrastructure, governance, and deployment workflows are fragmented. Microsoft Foundry is designed to close this gap with a unified, enterprise-oriented platform.

Instead of stitching together separate services, teams can select models, configure endpoints, and operationalize workloads in one governed environment. This reduces engineering overhead and accelerates production outcomes.

- Unifies model access, deployment, and monitoring in one platform.

- Reduces infrastructure complexity for production LLM rollout.

- Supports enterprise governance from the first deployment.

- Shortens time-to-value from pilot to live use case.

Five-Step Deployment Pattern for Custom LLMs

The deployment workflow is straightforward: configure the Foundry environment, choose a catalog model, set deployment mode, provision resources, and integrate through API endpoints. This enables fast onboarding for both pilot and production teams.

Foundry supports OpenAI-compatible endpoint patterns, which helps teams reuse existing integration code with minimal changes. Secure key handling and policy enforcement remain essential for production readiness.
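As a minimal sketch of what an OpenAI-compatible integration can look like, the snippet below builds a chat completions request using only Python's standard library. The endpoint URL, the deployment name, the `FOUNDRY_API_KEY` environment variable, and the bearer-token header are illustrative assumptions rather than values taken from Foundry documentation; check your project's endpoint details for the real URL and authentication scheme.

```python
import json
import os
import urllib.request


def build_chat_request(endpoint: str, deployment: str, user_text: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completions request."""
    # Read the key from the environment so it never lands in source control.
    # FOUNDRY_API_KEY is a hypothetical variable name chosen for this sketch.
    api_key = os.environ["FOUNDRY_API_KEY"]
    payload = {
        "model": deployment,  # assumed: the deployment name configured in the project
        "messages": [
            {"role": "system", "content": "You are a concise assistant."},
            {"role": "user", "content": user_text},
        ],
    }
    return urllib.request.Request(
        url=endpoint.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",  # assumed auth scheme
        },
        method="POST",
    )


if __name__ == "__main__":
    os.environ.setdefault("FOUNDRY_API_KEY", "placeholder-key")
    # example.invalid is a placeholder host, not a real Foundry endpoint.
    req = build_chat_request("https://example.invalid/openai/v1", "my-deployment", "Hello")
    print(req.get_method(), req.full_url)
```

The request is built but not sent; passing it to `urllib.request.urlopen` would perform the call. Because the payload follows the common chat completions shape, existing client code written against that shape should need little more than a new base URL and key.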

- Create a Hub and Project with linked Azure resources.

- Select a model from the Microsoft, partner, or open model catalogs.

- Choose serverless or provisioned capacity based on traffic profile.

- Validate in playground, then integrate through governed API access.
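One way to reason about the serverless versus provisioned choice in the list above is a rough break-even calculation. The sketch below assumes serverless is billed per token and provisioned capacity is billed at a flat hourly rate; every price and traffic figure is a hypothetical placeholder, so substitute the numbers from your own pricing sheet.

```python
def monthly_serverless_cost(requests_per_day: float, tokens_per_request: float,
                            price_per_1k_tokens: float, days: int = 30) -> float:
    """Pay-per-token cost: grows linearly with traffic."""
    return requests_per_day * tokens_per_request / 1000 * price_per_1k_tokens * days


def monthly_provisioned_cost(hourly_rate: float, days: int = 30) -> float:
    """Reserved-capacity cost: flat, regardless of traffic."""
    return hourly_rate * 24 * days


def cheaper_mode(requests_per_day: float, tokens_per_request: float,
                 price_per_1k_tokens: float, hourly_rate: float) -> str:
    """Return the mode with the lower estimated monthly cost."""
    serverless = monthly_serverless_cost(requests_per_day, tokens_per_request,
                                         price_per_1k_tokens)
    provisioned = monthly_provisioned_cost(hourly_rate)
    return "serverless" if serverless <= provisioned else "provisioned"


if __name__ == "__main__":
    # Hypothetical prices: 0.002 per 1k tokens, 2.0 per hour of reserved capacity.
    for traffic in (1_000, 50_000):
        print(traffic, cheaper_mode(traffic, 1500, 0.002, 2.0))
```

Cost is only one input. Steady high-volume traffic or strict latency targets can justify provisioned capacity even near the break-even point, while spiky or early-pilot traffic usually favors serverless.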

Deep Azure and Enterprise Integration

A major platform advantage is native integration with Azure services, Microsoft 365 workflows, and enterprise connectors. This allows teams to build complete AI solutions without leaving the Microsoft ecosystem.

Foundry supports grounded retrieval patterns, automation triggers, and observability pipelines that connect directly into enterprise operations. As a result, AI capabilities can be embedded where users already work.

- Combine LLMs with Azure AI services, Azure AI Search, and Azure data services.

- Enable RAG through enterprise knowledge sources and citations.

- Publish AI capabilities into Teams, Outlook, and Power Platform.

- Use built-in telemetry with Azure Monitor and Application Insights.
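To make the RAG-with-citations pattern concrete, here is a small, self-contained sketch. It uses a toy keyword-overlap ranker as a stand-in for a real search service, and the `Passage` type, file names, and prompt wording are all hypothetical; it illustrates the shape of the technique, not Foundry's own retrieval API.

```python
from dataclasses import dataclass


@dataclass
class Passage:
    source: str  # document identifier shown to the user as a citation
    text: str


def retrieve(query: str, passages: list[Passage], top_k: int = 2) -> list[Passage]:
    """Rank passages by shared words with the query (toy stand-in for a search service)."""
    query_words = set(query.lower().split())
    scored = [(len(query_words & set(p.text.lower().split())), p) for p in passages]
    # Drop passages with no overlap, then sort by score, highest first.
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: s[0], reverse=True)
    return [p for _, p in ranked[:top_k]]


def build_grounded_prompt(question: str, hits: list[Passage]) -> str:
    """Number each retrieved passage so the model can cite it as [n]."""
    sources = "\n".join(f"[{i}] ({p.source}) {p.text}" for i, p in enumerate(hits, start=1))
    return (
        "Answer using only the sources below and cite them as [n].\n\n"
        f"Sources:\n{sources}\n\nQuestion: {question}"
    )


if __name__ == "__main__":
    docs = [
        Passage("hr-policy.md", "Employees accrue vacation days monthly"),
        Passage("it-guide.md", "Reset your password in the portal"),
    ]
    question = "how do I reset my password"
    print(build_grounded_prompt(question, retrieve(question, docs)))
```

The resulting prompt would then be sent as the user message to the deployed model. Keeping the source identifier next to each passage is what lets the application render citations back to the enterprise document.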

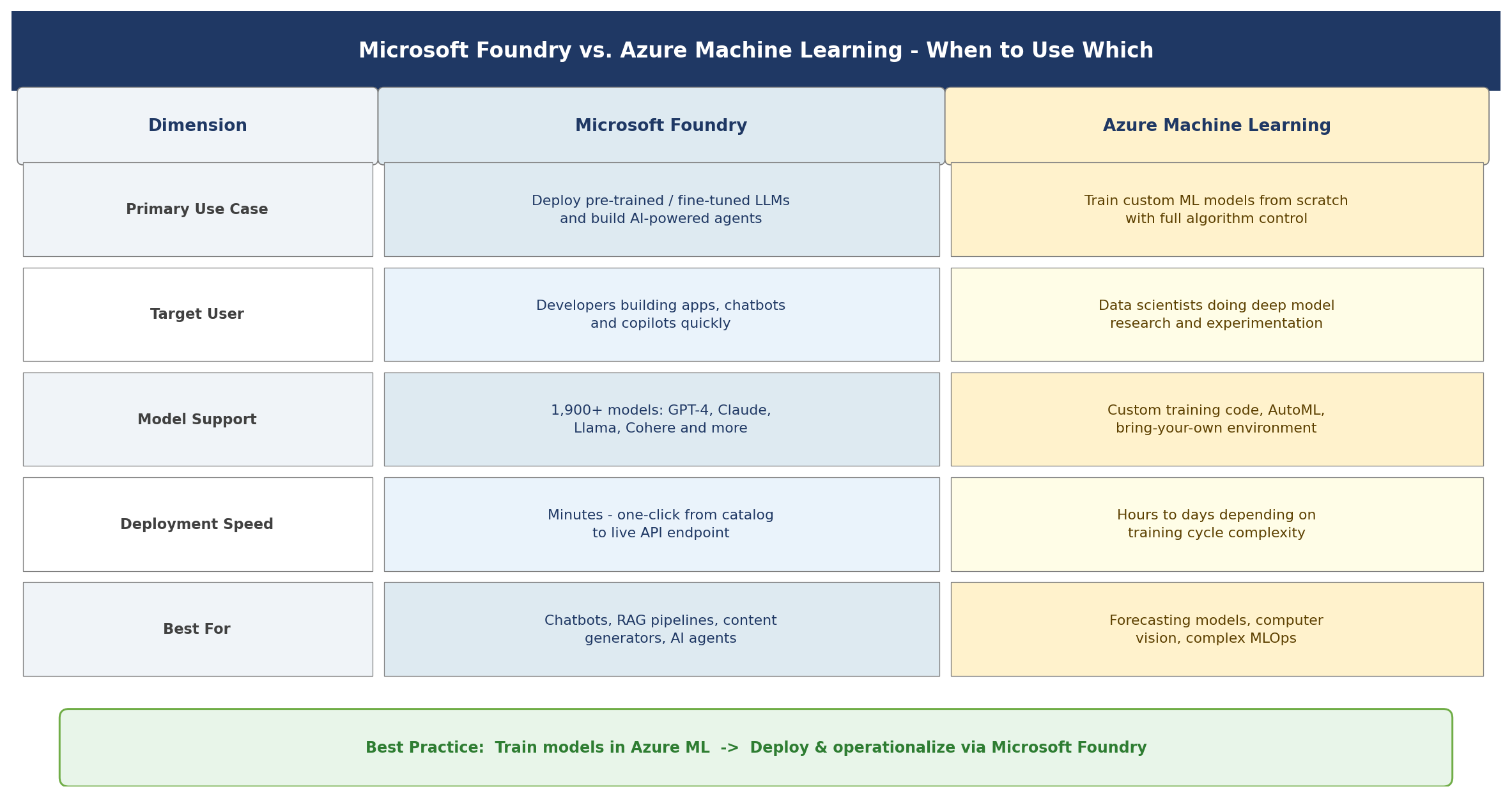

Foundry vs Azure ML and Strategic Value

Foundry and Azure ML are complementary rather than competing in many architectures. Foundry is best for fast GenAI app and agent deployment, while Azure ML is stronger for custom model research and full ML lifecycle experimentation.

For decision makers, Foundry delivers value through faster launch cycles, stronger productivity, lower operational overhead, and compliance-by-default controls. This makes it a practical enterprise platform for scaling GenAI responsibly.

- Use Foundry for rapid GenAI productization and agent workflows.

- Use Azure ML for advanced experimentation and custom training pipelines.

- Combine both when training and deployment responsibilities differ.

- Measure value through speed, quality, governance, and cost metrics.
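The last bullet can be turned into a simple scorecard. The sketch below summarizes a batch of request logs into one number each for speed, quality, governance, and cost; the `RequestLog` fields, the nearest-rank p95 method, and the token price are assumptions made for illustration, not fields exported by any particular telemetry service.

```python
import math
from dataclasses import dataclass


@dataclass
class RequestLog:
    latency_ms: float
    total_tokens: int
    rated_helpful: bool     # hypothetical user feedback flag
    policy_violation: bool  # hypothetical content-policy flag


def summarize(logs: list[RequestLog], price_per_1k_tokens: float) -> dict[str, float]:
    """Summarize speed, quality, governance, and cost for a non-empty batch of logs."""
    n = len(logs)
    latencies = sorted(log.latency_ms for log in logs)
    # Nearest-rank 95th percentile: the smallest value with at least 95% of samples at or below it.
    p95 = latencies[math.ceil(95 * n / 100) - 1]
    total_cost = sum(log.total_tokens for log in logs) / 1000 * price_per_1k_tokens
    return {
        "p95_latency_ms": p95,
        "helpful_rate": sum(log.rated_helpful for log in logs) / n,
        "violation_rate": sum(log.policy_violation for log in logs) / n,
        "cost_per_request": total_cost / n,
    }


if __name__ == "__main__":
    sample = [
        RequestLog(200, 1000, True, False),
        RequestLog(400, 2000, True, False),
        RequestLog(600, 1000, False, True),
        RequestLog(800, 0, True, False),
    ]
    print(summarize(sample, price_per_1k_tokens=0.002))
```

Tracking the same four numbers before and after a rollout gives decision makers a like-for-like comparison between the pilot and the live use case.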

Frequently Asked Questions

What is Microsoft Foundry?

Microsoft Foundry is an Azure-based platform for building, deploying, and managing LLMs and AI agents with enterprise-grade governance, security, and observability.

How do I deploy a custom LLM quickly?

Create a Foundry Hub and Project, select a model in Models + endpoints, choose serverless or provisioned deployment, and publish an API endpoint for app integration.

Can Foundry work with GPT and Claude models?

Yes. Foundry supports multiple model providers, including OpenAI and Anthropic options, alongside open-source models in the broader catalog.

When should I use Foundry instead of Azure Machine Learning?

Use Foundry when you need rapid deployment of GenAI apps and agents; use Azure ML for deeper custom training and full data science lifecycle workflows.

Conclusion

This guide outlines a practical path for deploying custom LLMs on Microsoft Foundry in an enterprise environment with speed, control, and measurable outcomes.

Get Started

- Talk with our team to assess your current architecture and use-case readiness

- Prioritize one high-impact pilot and define success metrics

- Deploy with governance, monitoring, and a scale-ready operating model

ARC Team

AI-powered Microsoft Solutions Partner delivering enterprise solutions on Azure, SharePoint, and Microsoft 365.