Fine-Tune GPT-4o on Azure AI Foundry | LLM Fine-Tuning Developer Guide

A practical guide to fine-tuning GPT-4o on Azure AI Foundry, covering data prep, training settings, safety checks, deployment, and production optimization.

ARC Team

Updated April 6, 2026

Why Fine-Tune GPT-4o in Azure AI Foundry

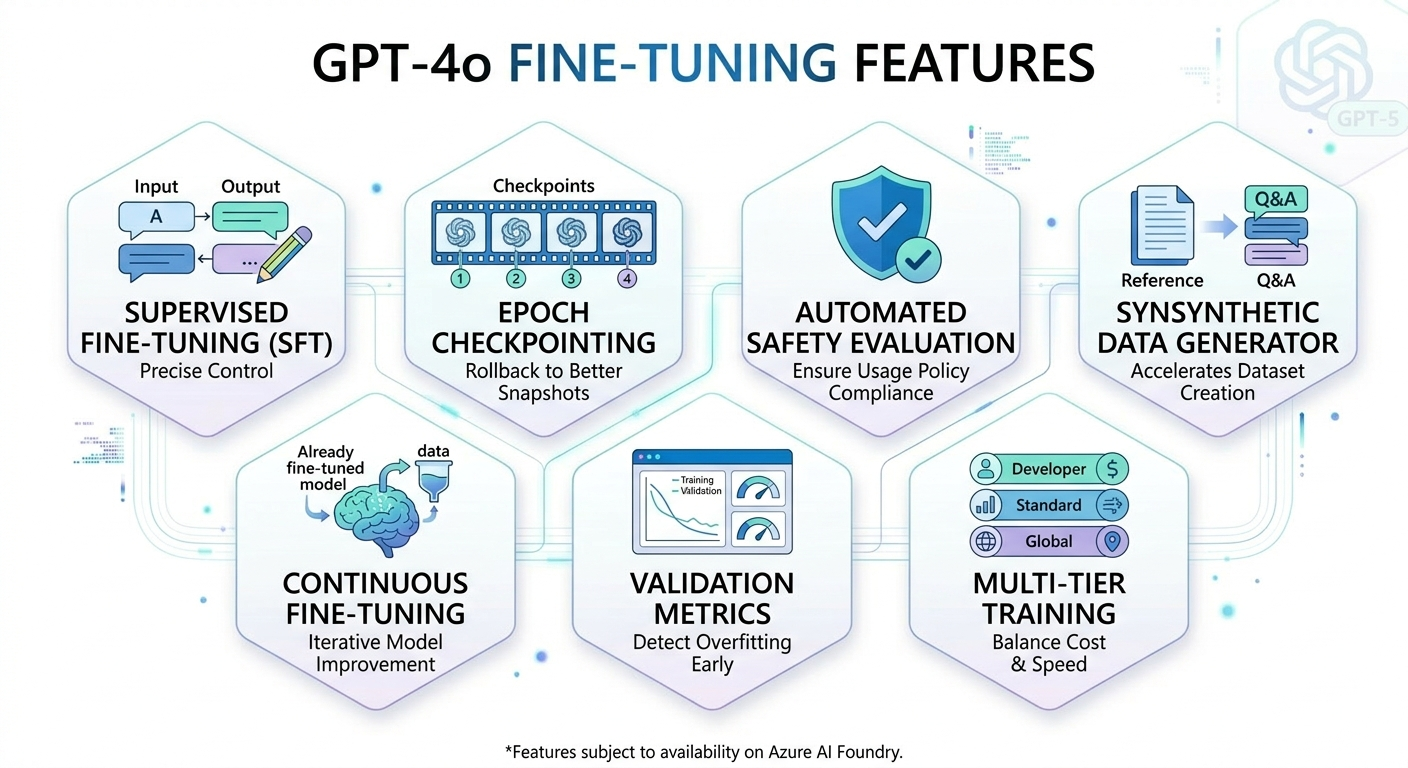

Azure AI Foundry provides a governed workspace for building and deploying AI applications, and GPT-4o is a strong multimodal model available within it. Fine-tuning becomes useful when prompt-only approaches produce inconsistent results, need long and costly prompts, or are difficult to scale.

By training on curated examples, teams can enforce preferred tone, structured output formats, and domain-specific behavior. This is especially important in customer-facing and regulated workflows where consistency is a business requirement.

- Improves output consistency beyond prompt engineering alone.

- Reduces prompt complexity and token overhead in production.

- Supports domain-specific behaviors at scale.

- Fits enterprise governance and monitoring requirements.

End-to-End Fine-Tuning Workflow

A typical flow includes data preparation, job configuration, training, evaluation, and deployment. Training and validation files must be JSONL in chat format, with one example per line, and a smaller set of high-quality examples generally produces better behavior than a larger but noisy one.
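
As a minimal sketch of that format, the Python below builds one text-only training example and runs a few sanity checks on each line before upload. The checks (allowed roles, non-empty string content, ending on an assistant reply) are our own assumptions about what a clean example looks like, not Azure's full validation rules, and multimodal examples whose content is a list of parts would be flagged by this simple checker. The sample conversation is hypothetical.

```python
import json

# Roles accepted in a text-only chat example (an assumption for this sketch).
ALLOWED_ROLES = {"system", "user", "assistant"}


def make_example(system: str, user: str, assistant: str) -> dict:
    """Build one chat-format training example: the prompt turns plus the ideal reply."""
    return {
        "messages": [
            {"role": "system", "content": system},
            {"role": "user", "content": user},
            {"role": "assistant", "content": assistant},
        ]
    }


def validate_line(line: str) -> list[str]:
    """Return the problems found in one JSONL line; an empty list means it passed."""
    try:
        record = json.loads(line)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]
    messages = record.get("messages") if isinstance(record, dict) else None
    if not isinstance(messages, list) or not messages:
        return ["missing or empty 'messages' list"]

    problems = []
    for i, msg in enumerate(messages):
        if not isinstance(msg, dict):
            problems.append(f"message {i} is not an object")
            continue
        if msg.get("role") not in ALLOWED_ROLES:
            problems.append(f"message {i} has unexpected role {msg.get('role')!r}")
        content = msg.get("content")
        if not isinstance(content, str) or not content.strip():
            problems.append(f"message {i} has empty or non-string content")
    last = messages[-1]
    if isinstance(last, dict) and last.get("role") != "assistant":
        problems.append("last message should be the assistant reply")
    return problems


def write_jsonl(examples: list[dict], path: str) -> None:
    """Write one JSON object per line, which is what the JSONL format requires."""
    with open(path, "w", encoding="utf-8") as f:
        for example in examples:
            f.write(json.dumps(example, ensure_ascii=False) + "\n")


# Hypothetical example for a support assistant.
example = make_example(
    "You are a concise support assistant for Contoso.",
    "How do I reset my password?",
    "Open Settings > Security, choose Reset password, and follow the emailed link.",
)
print(validate_line(json.dumps(example)))
```

Running every line of a file through a check like this before upload catches malformed records early, when they are cheapest to fix.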

Azure supports managed training runs with checkpointing and built-in safety screening before deployment. This helps teams validate quality and policy conformance before exposing models to production workloads.

- Use JSONL chat format with role-structured examples.

- Start with a baseline run, then iterate using checkpoints.

- Track training and validation metrics for overfitting signals.

- Block deployment when safety thresholds are not met.
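
One way to act on training and validation metrics is to find where validation loss bottoms out while training loss keeps falling. The sketch below assumes the metrics are available as CSV with step, train_loss, and valid_loss columns; Azure exports a results file for each job, but confirm its actual column names before reusing this. The patience threshold and the sample numbers are illustrative only.

```python
import csv
import io

# Illustrative metrics; assumed columns: step, train_loss, valid_loss.
SAMPLE_RESULTS = """step,train_loss,valid_loss
10,1.20,1.25
20,0.90,1.00
30,0.70,0.92
40,0.55,0.95
50,0.40,1.05
"""


def load_losses(text: str) -> list[tuple[int, float, float]]:
    """Parse (step, train_loss, valid_loss) rows, skipping steps without validation loss."""
    rows = []
    for row in csv.DictReader(io.StringIO(text)):
        if row.get("valid_loss"):
            rows.append((int(row["step"]), float(row["train_loss"]), float(row["valid_loss"])))
    return rows


def overfitting_report(rows: list[tuple[int, float, float]], patience: int = 2) -> dict:
    """Locate the lowest validation loss and flag overfitting when validation loss
    rose while training loss fell for at least `patience` consecutive evaluations
    at the end of the run."""
    if not rows:
        raise ValueError("no rows with validation loss")
    best_step, _, best_valid = min(rows, key=lambda r: r[2])
    streak = 0
    for (_, prev_train, prev_valid), (_, train, valid) in zip(rows, rows[1:]):
        # Extend the streak only while the two losses move in opposite directions.
        streak = streak + 1 if (valid > prev_valid and train < prev_train) else 0
    return {"best_step": best_step, "best_valid_loss": best_valid, "overfitting": streak >= patience}


rows = load_losses(SAMPLE_RESULTS)
print(overfitting_report(rows))
```

The best step indicates roughly where to look when choosing among the checkpoints a job retains; if overfitting is flagged, fewer epochs or a lower learning-rate multiplier is a reasonable next experiment.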

Hyperparameters, Tiers, and Cost-Performance Tradeoffs

Hyperparameters such as the number of epochs, the learning-rate multiplier, and the batch size directly affect model quality and training cost. Most teams do best by starting from conservative settings and adjusting them one at a time based on evaluation results.
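
To see how epochs drive cost, here is a back-of-the-envelope estimate. It assumes training is billed per token processed and that every epoch re-reads the full dataset; the price per million tokens is a placeholder parameter, not a published rate, and the estimate ignores any hosting charges for the deployed model.

```python
def estimate_training_cost(dataset_tokens: int, n_epochs: int, price_per_million: float) -> float:
    """Rough training cost under a linear, per-token billing assumption.

    Every epoch is assumed to re-process the whole dataset, so billed tokens
    grow linearly with n_epochs. price_per_million is a placeholder: look up
    the current rate for your model, region, and training tier.
    """
    if dataset_tokens < 0 or n_epochs < 1 or price_per_million < 0:
        raise ValueError("tokens and price must be non-negative and epochs at least 1")
    billed_tokens = dataset_tokens * n_epochs
    return billed_tokens / 1_000_000 * price_per_million


if __name__ == "__main__":
    # Hypothetical dataset size and rate, used only to compare epoch settings.
    for epochs in (2, 3, 4):
        cost = estimate_training_cost(2_000_000, epochs, price_per_million=10.0)
        print(f"{epochs} epochs -> {cost:.2f}")
```

For a 2,000,000-token dataset at a placeholder rate of 10 per million tokens, three epochs mean 6,000,000 billed tokens, or 60 in that currency; moving from 2 to 4 epochs doubles the figure, from 40 to 80.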

Tier selection should match deployment intent: Developer for rapid testing, Standard for production stability, and Global for drawing on capacity beyond a single region, which can shorten queue times for larger or time-sensitive programs.

- Use 2-4 epochs as an initial practical range for many workloads.

- Adjust the learning-rate multiplier in small steps; values that are too high can make training unstable.

- Choose tier based on throughput, latency, and governance needs.

- Set a fixed seed so experiments are repeatable and auditable.
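
These settings map onto the fields of a fine-tuning job request. The sketch below only assembles that request as a dictionary, so it runs without credentials. The field names follow the OpenAI-compatible fine-tuning jobs API that Azure OpenAI exposes; the file IDs, suffix, and default model version are placeholders to replace with values from your own resource.

```python
from typing import Optional


def build_job_request(
    training_file: str,
    validation_file: Optional[str] = None,
    model: str = "gpt-4o-2024-08-06",  # placeholder; use a fine-tunable version offered in your region
    n_epochs: int = 3,
    batch_size: Optional[int] = None,
    learning_rate_multiplier: Optional[float] = None,
    seed: int = 42,
    suffix: Optional[str] = None,
) -> dict:
    """Assemble keyword arguments for a fine-tuning job request.

    Hyperparameters left as None are omitted so the service can choose its
    defaults. A fixed seed makes reruns comparable for audits.
    """
    if n_epochs < 1:
        raise ValueError("n_epochs must be at least 1")
    if learning_rate_multiplier is not None and learning_rate_multiplier <= 0:
        raise ValueError("learning_rate_multiplier must be positive")

    hyperparameters = {"n_epochs": n_epochs}
    if batch_size is not None:
        hyperparameters["batch_size"] = batch_size
    if learning_rate_multiplier is not None:
        hyperparameters["learning_rate_multiplier"] = learning_rate_multiplier

    request = {
        "model": model,
        "training_file": training_file,
        "hyperparameters": hyperparameters,
        "seed": seed,
    }
    if validation_file is not None:
        request["validation_file"] = validation_file
    if suffix is not None:
        request["suffix"] = suffix
    return request


# Hypothetical file IDs returned by an earlier upload step.
request = build_job_request(
    training_file="file-train-123",
    validation_file="file-valid-456",
    n_epochs=3,
    learning_rate_multiplier=0.5,
    seed=1234,
    suffix="support-bot",
)
print(request)
```

With the openai Python package, a dictionary like this could be passed as client.fine_tuning.jobs.create(**request) on an AzureOpenAI client configured with your endpoint, key, and API version. Newer API versions may prefer hyperparameters nested under a method field, so check the version you target. For continuous fine-tuning, model would name a previously fine-tuned model rather than the base model.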

From Pilot to Production

Production readiness requires more than a successful training job. Teams need role-based access controls, secure data handling, endpoint monitoring, and a repeatable evaluation framework tied to business outcomes.

A staged rollout with defined acceptance criteria, monitoring dashboards, and periodic refresh cycles helps maintain quality over time. Continuous fine-tuning can then incorporate new examples without retraining from scratch.
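
A stage gate in such a rollout can be as simple as comparing each stage's results with the acceptance criteria before widening traffic. This is a minimal sketch: the two criteria (evaluation pass rate and 95th-percentile latency) and their thresholds are hypothetical examples of the success metrics a team would agree on up front.

```python
from dataclasses import dataclass


@dataclass
class AcceptanceCriteria:
    # Hypothetical thresholds; replace with the metrics agreed for your use case.
    min_pass_rate: float = 0.95
    max_p95_latency_ms: float = 1500.0


def p95(samples: list[float]) -> float:
    """95th percentile by the nearest-rank method (integer math avoids float rounding)."""
    if not samples:
        raise ValueError("need at least one latency sample")
    ordered = sorted(samples)
    rank = (95 * len(ordered) + 99) // 100  # ceil(0.95 * n)
    return ordered[rank - 1]


def stage_decision(passed: int, total: int, latencies_ms: list[float],
                   criteria: AcceptanceCriteria) -> str:
    """Return "promote" if this stage meets every criterion, otherwise "hold"."""
    if total <= 0:
        raise ValueError("total must be positive")
    pass_rate = passed / total
    if pass_rate >= criteria.min_pass_rate and p95(latencies_ms) <= criteria.max_p95_latency_ms:
        return "promote"
    return "hold"


criteria = AcceptanceCriteria()
latencies = [float(ms) for ms in range(100, 2100, 100)]  # 20 synthetic samples
print(p95(latencies))
print(stage_decision(97, 100, [300.0] * 20, criteria))
print(stage_decision(97, 100, latencies, criteria))
```

Keeping the gate as code rather than a manual judgment makes each promotion decision repeatable and easy to audit.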

- Define success metrics before deployment.

- Test with golden prompts and failure-case suites.

- Instrument endpoint usage, latency, and quality drift.

- Use iterative retraining cycles to maintain domain relevance.
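
Golden prompts and failure cases can live in a small harness that runs each prompt through the model and applies a check to the reply. In the sketch below, stub_model stands in for a call to your fine-tuned deployment, and the cases and checks are hypothetical; each case encodes one expected behavior, such as valid JSON with required keys or a refusal for off-topic requests.

```python
import json
from typing import Callable


def has_json_keys(*keys: str) -> Callable[[str], bool]:
    """Build a check that passes when the reply is a JSON object containing all `keys`."""
    def check(reply: str) -> bool:
        try:
            data = json.loads(reply)
        except json.JSONDecodeError:
            return False
        return isinstance(data, dict) and all(k in data for k in keys)
    return check


def run_suite(model: Callable[[str], str], cases: list) -> dict:
    """Run (name, prompt, check) cases and collect the names of failing ones."""
    failures = [name for name, prompt, check in cases if not check(model(prompt))]
    return {"total": len(cases), "passed": len(cases) - len(failures), "failures": failures}


def stub_model(prompt: str) -> str:
    # Hypothetical stand-in for the deployed fine-tuned model.
    if "refund" in prompt:
        return '{"intent": "refund", "priority": "high"}'
    return "Sorry, I can't help with that."


CASES = [
    ("refund_as_json", "Customer asks for a refund on order 123",
     has_json_keys("intent", "priority")),
    ("off_topic_refusal", "Write me a poem about cats",
     lambda reply: "can't help" in reply),
    ("login_issue_as_json", "Customer reports they cannot log in",
     has_json_keys("intent", "priority")),
]

report = run_suite(stub_model, CASES)
print(report)
```

Running the same suite after every retraining cycle turns regressions into named, reproducible failures rather than anecdotes from production.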

Frequently Asked Questions

How much data is needed to fine-tune GPT-4o?

Azure accepts jobs with as few as 10 examples, but meaningful quality improvements usually require at least 50 high-quality, diverse examples.

How is fine-tuning different from prompt engineering?

Prompting guides behavior at runtime, while fine-tuning updates model behavior through supervised training so preferred patterns are embedded in the model.

What happens if training data violates policy?

Azure screens training data and can reject a job whose data exceeds safety thresholds. In that case the job stops before any model can be deployed, and you are not charged for that stage.

Can a fine-tuned model be improved later?

Yes. Continuous fine-tuning lets teams build on previously tuned models with new datasets, enabling iterative quality improvement over time.

Conclusion

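This guide outlines a practical path to fine-tuning GPT-4o on Azure AI Foundry in an enterprise environment: curate chat-format training data, start from conservative hyperparameters, gate deployment on evaluation and safety checks, and refresh the model as new examples arrive, with speed, control, and measurable outcomes.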

Get Started

- Talk with our team to assess your current architecture and use-case readiness.

- Prioritize one high-impact pilot and define success metrics.

- Deploy with governance, monitoring, and a scale-ready operating model.

ARC Team

AI-powered Microsoft Solutions Partner delivering enterprise solutions on Azure, SharePoint, and Microsoft 365.
